DS412

Neural Networks and Computer Vision

Faculty Profiles

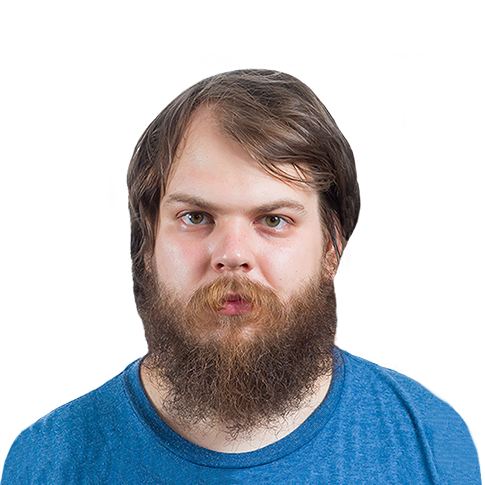

Sergey Nikolenko

Chief Research Officer, Neuromation; Head of AI Lab, PDMI RAS

Alexey Davydov

Researcher at Steklov Math Institute, Researcher at Synthesis AI

Overview

Deep learning, i.e., training multilayered neural architectures, is one of the oldest tools in machine learning, yet it has revolutionized the industry over the last decade. In this course, we begin with the fundamentals of deep learning and then proceed to modern architectures for basic computer vision problems: image classification, object detection, segmentation, and others.

Modern computer vision is gradually moving from architectures based on deep convolutional neural networks to Transformer-based models, so it is a natural setting for exploring interesting architectures while staying focused rather than surveying the entire field of deep learning. Computer vision is also a key element of robotics: vision systems are necessary for navigation, localization and mapping, and scene understanding, all of which are key problems in building industrial and home robots.

Learning highlights

- Learn to apply Deep Learning techniques in practice

- Understand the theory behind Deep Learning from basics to state-of-the-art approaches

- Learn how to train various deep neural architectures

- Understand a wide variety of neural architectures suited for real-life computer vision problems

- Gain essential experience with main Deep Learning frameworks

Course outline

15 classes

Neural network basics

Neural networks: history and basic idea. Relationship between biology and mathematics. The perceptron: basic construction, training, activation functions.

Practice: intro to Deep Learning frameworks

Feedforward neural networks

Feedforward neural networks. Gradient descent basics. Computation graph and computing gradients on the computation graph (backpropagation).

Practice: a feedforward neural network on classic datasets

Optimisation in neural networks

Gradient descent: motivation, problems. Modifications, ideas: momentum, Nesterov’s momentum, Adagrad, RMSProp, Adam. Second-order methods.

Practice: comparing gradient descent variations.

Regularisation in neural networks

Regularisation: L1, L2, early stopping. Dropout. Data augmentation.

Practice: Applying different regularisation approaches.

Weight initialisation and batch norm

Weight initialisation: supervised pre-training idea, why straightforward random init fails, Xavier initialisation. Covariate shift and batch normalisation.

Practice: putting everything together.

Convolutional neural networks

Convolutional architectures: idea and structure. Examples. Deconvolution and visualisation in CNNs. AlexNet and VGG. Network in Network and Inception.

Practice: image classification.

CNNs and object detection

Modern convolutional architectures. Residual connections and ResNet. EfficientNet. From classification to object detection. The R-CNN family. Two-stage and single-stage detectors: the YOLO family.

Practice: object detection

Transformers

Another machine learning revolution: the Transformer architecture. Idea, formal description, applications. BERT and GPT families.

Practice: applying Transformers

Mid-term test

Mid-term test

Object detection and segmentation

Deep learning for image segmentation: fully convolutional networks, U-Net, instance segmentation with Mask R-CNN. Transformers for detection and segmentation.

Practice: deep learning for segmentation.

Generative models in deep learning

Generative models and neural networks. Types of generative models. Autoregressive deep learning models, WaveNet.

Practice: autoregressive models.

Generative adversarial networks

Generative adversarial networks: idea, DCGAN, AAE, conditional GANs. Wasserstein GANs. Various loss functions in GANs. GANs for image generation.

Practice: AAE on MNIST

Case study: style transfer

Style transfer: problem settings, models for style transfer. GANs for style transfer: from pix2pix to StyleGAN.

Practice: style transfer model.

Diffusion-based models

Basic idea of diffusion-based models. The DDPM and DDIM models. How diffusion models combine with Transformers to get Stable Diffusion, DALL-E 2 and others.

Practice: diffusion-based models

Final exam

Final exam

Prerequisites

Master’s Machine Learning

Python programming experience

At least basic knowledge of Linear Algebra, Probability Theory and Optimisation

Methodology

The course will be organized into three-hour sessions and self-study practical assignments. Each session will combine theory and practice, in proportions that vary with the material.

Grading

Sergey Nikolenko is a computer scientist with vast experience in machine learning and data analysis, algorithm design and analysis, theoretical computer science, and algebra. He graduated from St. Petersburg State University in 2005, majoring in algebra (Chevalley groups), and earned his Ph.D. at the Steklov Mathematical Institute in St. Petersburg in 2009 in theoretical computer science (circuit complexity and theoretical cryptography). Since then, Sergey has been interested in machine learning and probabilistic modeling, producing theoretical results and working on practical projects for the industry.

Sergey Nikolenko currently serves as the Chief Research Officer at Neuromation, leads the Artificial Intelligence Lab at the Steklov Mathematical Institute in St. Petersburg, and teaches at St. Petersburg State University and the Higher School of Economics. Dr. Nikolenko has published more than 170 research papers on machine learning (ICML, CVPR, ACL, SIGIR, WSDM...), analysis of algorithms (SIGCOMM, INFOCOM, ICNP…), and other fields. He has also written several books, including a bestselling “Deep Learning” book (in Russian), and developed lecture courses in ML, DL, and other fields of computer science (St. Petersburg State University, NRU Higher School of Economics...). He has extensive experience in managing research and industrial AI/ML projects.

Alexey Davydov is a computer scientist experienced in algorithm design and machine learning. He received his bachelor's degree in physics at the Moscow Institute of Physics and Technology and his master's degree at St. Petersburg Academic University. His main research interests are the development of competitive scheduling algorithms and the use of synthetic data in deep learning.

He has been teaching at St. Petersburg Academic University, the Computer Science Center, and St. Petersburg State University since 2012. Alexey Davydov is currently a researcher at the Steklov Math Institute, where he works on theoretical research, and at Neuromation, where he can apply it in practice.

Apply for this course

Neural Networks and Computer Vision

by Sergey Nikolenko, Alexey Davydov

Total hours

45 Hours

Dates

Jun 08 - Jun 26, 2026

Fee for single course

€1500

Fee for degree students

€750

How to secure your spot

Complete the form below to kickstart your application

Schedule your Harbour.Space interview

If successful, get ready to join us on campus

FAQ

Will I receive a certificate after completion?

Yes. Upon completion of the course, you will receive a certificate signed by the director of the program your course belongs to.

Do I need a visa?

This depends on your case. Please check with the Spanish or Thai consulate in your country of residence about visa requirements. We will do our part to provide you with the necessary documents, such as the Certificate of Enrollment.

Can I get a discount?

Yes. The easiest way to enroll in a course at a discounted price is to register for multiple courses. Registering for multiple courses will reduce the cost per individual course. Please ask the Admissions Office for more information about the other kinds of discounts we offer and what you can do to receive one.